How to Expertly Use LLMs in Development Workflows

Articles in the “A Pragmatic Look at AI (LLMs) in Software Development Workflows” series:

- Part 1: A Pragmatic Look at AI and LLMs in Software Development Workflows

- Part 2: What Even Is “AI”? Defining Key Terms in Plain Language

- Part 3: Why Developers Should – and Shouldn’t – Use LLMs in Our Development

- Part 4: How to Expertly Use LLMs in Development Workflows

- Part 5: How Will LLMs Transform Us? AI as a Tool in the Future of Development

Imagine: You’re about to board a commercial flight. Directly ahead of you in line, you learn, is the person who engineered this very model of plane. “It’s equipped with state-of-the-art technology!” this person tells you. “It’s able to fly itself autonomously, from takeoff to landing!” You chat as you shuffle down the jetway, and just before you reach the threshold, they say, “You wanna know the wildest part? I don’t even know how any of this works! I just kept mashing parts together, and somehow the whole thing stayed up in the air! Enjoy the flight!”

Are you getting on that plane?

Playing with AI vs. Working with AI

There are two ways we use LLMs in our app dev workflow.

In one method, we use LLMs like that plane engineer, mashing parts together. We call this vibe coding.

In the other method, which many people now call agentic coding, we carefully and painstakingly let LLMs take prescribed roles in our existing development process. We don’t commit a line of code unless we understand (1) how the code works, (2) why we’re implementing it, and (3) where it belongs in the architecture of the codebase. And we’re more likely to write the code ourselves than to let AI do it; AI is a tool for occasional amplification, not for generating our entire codebase.

To smoothly land our metaphor above: Only agentic coding produces code we’d ever let the public fly in.

Vibe coding generally means prompting an LLM and committing the output without caring much about what the code looks like. More broadly, it’s a style of freeform, iterative, exploratory prompting: trying new and different ideas and chasing inspiration wherever it leads, with little (if any) concern for security, scalability, elegance, and so on. It’s great for rapid prototyping, one-off tools, and personal software. But it inevitably produces code that’s unfit for client-facing work.

Agentic coding is a more methodical system of structured task delegation. We meticulously plan discrete, well-defined objectives, assign them to the LLM, and then closely review the code, one task at a time, before committing any output to our codebase. Rather than exploring unknown ideas, we position ourselves as the project leader and lead developer, and the AI agent as a helper: a junior dev. Used this way, agentic coding helps us produce Tighten-level, production-ready code, often faster than we could manually.

At Tighten, we’re experts in Laravel, and it just so happens that Laravel pairs exceptionally well with the kind of agentic coding that’s now possible with LLMs. Why? Laravel’s opinionated defaults, more than a decade of stability, and extensive documentation combine to minimize the situations where the LLM has to make a decision based on expertise it doesn’t have.

“Your AI is only as good as the decisions it doesn’t have to make. Every time an LLM has to choose between competing patterns, pick a library, or figure out how to wire things together. That’s where things can go wrong. … Laravel has built in defaults for almost everything. ... [T]hat’s not a limitation. It’s a superpower”.

Ben Bjurstrom, “The Best Vibecoding Stack for 2026”

Playing around with AI is easy. Figuring out how to work well with LLMs and still deliver Tighten-level code isn’t as straightforward. But we didn’t learn agentic coding begrudgingly. We understand the potential of LLM-assisted software development. We also hold fast to our belief that, individually and as a team, we must figure out how to leverage LLMs in a way that’s useful, responsible, ethical, and sustainable for ourselves, our teammates, and our clients, and in a way that delivers code we’re proud of and confident in.

Look: Vibe coding is fun. It’s like playing with Magna-Tiles… and also Legos… and also Play-Doh and K’NEX and superglue and random limbs you’ve snapped off action figures, all at the same time. Spontaneous, structureless, Frankenstein-y fun. Anyone can vibe code, which is exactly why, when it’s time to do work (especially the kind of expert work someone is paying you for), agentic coding is the only responsible, ethical, and sustainable way forward.

Agentic Coding: How to expertly use LLMs in your dev workflow

In late 2025 and early 2026, agent-driven development of every variety has exploded. Every week there’s a new AI tool or workflow to consider. AI maximalists pitch agentic tools as capable of delivering an entirely automated process, an Easy-Bake Oven sort of “set it and forget it” (you’ll often hear the term “one-shotting” to describe an attempt to build a production-ready app with a single prompt).

The real magic in agentic coding lies in “structured task delegation”: breaking complex projects into discrete, well-defined objectives that you assign to your LLM one at a time. We do this manually; some folks build development processes that feed tasks to their agents automatically. Regardless of your system, keep the tasks small and discrete. As we refine our agentic coding process week over week, one truth remains chiseled in stone:

AI can amplify expertise. It can’t replace it.

With that first principle in mind...

A Process for Agentic Coding with LLMs

1. Start with your own expertise: Your brain houses the architecture, the security considerations, the 6-, 18-, and 120-month implications; in short, all of the experiences and skills that undergird and reinforce the decisions you make. AI agents only have the context you give them, plus whatever they absorbed from the internet during training. Trust your domain knowledge. And be ruthless in forcing your agent to work within your system, not the other way around.

2. Think first: You don’t need a 40-page project plan, but the speed (and, currently, the low cost) with which LLMs generate code makes it easier than ever to build features or architecture that don’t actually make sense for your project. Make sure you know what you’re building and why, and that it’s worth it. Then break the work ahead of you into bite-sized pieces. Pick your favorite piece to start with, whether that’s the most important one or the one everything else will build on. And, if the budget allows, ask the LLM to draft a plan first, and evaluate that plan.

3. Execute one task at a time: LLMs thrive with narrower scopes. This is why we value the precision of iterating over the humblebraggadocio of one-shotting. And while the context windows of many LLMs are getting bigger, the models still perform better when the context isn’t full (basically, when they don’t have to hold too much information in their memory at once). A good way to keep the context lean is to clear it between tasks.

4. Review methodically: Never, ever, ever trust an LLM to review its own work. Even if you have LLMs reviewing each other’s work, review it yourself. Be thorough in reviewing an agent’s output. This keeps you embedded in the architecture and in control of all the systems planning. Never offload the work of learning how your work works (say that three times fast).

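Tip: Ask the agent to explain its work back to you. “ELI18” (explain it like I’m 18) or “explain it like I’m a junior dev” is an effective short order. This forces an explanation in clear and simple terms.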

Once you’re comfortable working with LLMs and know what they’re good (and not good) at, it’s much safer to work in new technologies. Even so, we still recommend that you never commit code you don’t understand. Even if the LLM helped you write something you didn’t know how to write before, once you commit it, you’re responsible for maintaining it; make sure you understand what you’re committing.

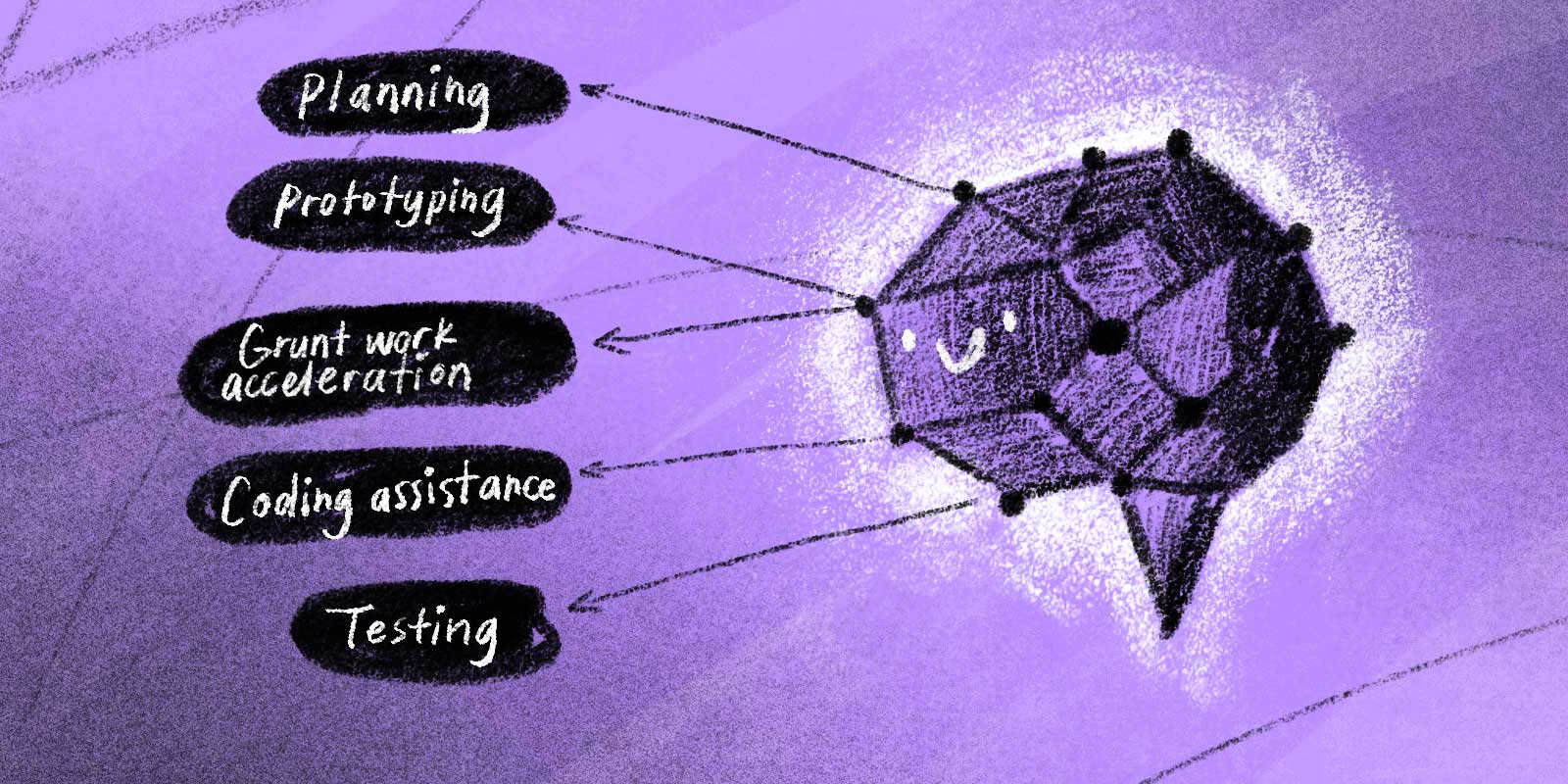

Other Use Cases for AI in Software Development

We surveyed our team to round up other ways they’ve been incorporating AI into their workflows. Here’s what they shared:

1. Planning & Ideation

- Conversation partner for architecture brainstorming

- Getting from “zero to one” (from idea to first prototype)

- Weighing implementation tradeoffs before committing

- Rubber duck debugging

- Initial data model generation

2. Rapid Prototyping & MVPs

- Lightning-fast MVPs/proof-of-concepts

- Cloning open-source projects and conversing with an AI agent to grok their structure

- One-off, non-client-facing internal tools (data dashboards, diagnostic utilities, etc.)

3. Accelerating Grunt Work

- Delegating rote or non-creative tasks

- Rewriting lists, reversing key/value pairs, alphabetizing

- Shell/CLI commands (review before running)

- File conversions, test data generation, scripting, image resizing, format transformations

- “Lazy work” assistant while you tackle higher-value tasks

4. Coding Assistance

- Regex generation/debugging (for the brave)

- Pattern replication across components/files

- Auto-fixing PHPStan-type issues

- Boilerplate and boring fundamentals

- Code explanation (Copilot’s /explain feature)

5. Testing, Maintenance, and Refactoring

- Examining and improving test coverage

- Error handling and edge-case improvements

- Code reviews for maintainability/consistency

When we use AI without structure and intention, an agentic coding session can morph imperceptibly into freewheeling vibe coding. Over long, unfocused sessions, LLMs quickly fill up their context window, because each model can only hold so many tokens at a time. Depending on the model (and the tool around it), the LLM will try to resolve this scarcity either by (1) conserving the original prompt, overlooking the meandering middle, and mashing it together with your most recent input, or (2) cherry-picking from your entire conversation, which increases the likelihood of hallucination.
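To make strategy (1) a little more concrete, here is a toy Python sketch of “keep the original prompt, keep the most recent messages, drop the middle.” It’s an illustration under loud assumptions, not how any particular model or tool actually manages context: the function name `truncate_middle` is hypothetical, and counting whitespace-separated words stands in for a real, model-specific tokenizer. Real tools may also summarize the dropped middle rather than discard it.

```python
from typing import Callable, List


def word_count(message: str) -> int:
    """Crude stand-in for a tokenizer: one 'token' per whitespace-separated word."""
    return len(message.split())


def truncate_middle(
    messages: List[str],
    max_tokens: int,
    count_tokens: Callable[[str], int] = word_count,
) -> List[str]:
    """Hypothetical helper: keep the first message (the original prompt) plus
    as many of the most recent messages as fit in max_tokens.
    Everything in between, the "meandering middle," is dropped."""
    if not messages:
        return []

    first, rest = messages[0], messages[1:]
    # The original prompt is always kept, even if it alone exceeds the budget.
    budget = max_tokens - count_tokens(first)

    kept: List[str] = []
    # Walk backward from the newest message until one no longer fits.
    for message in reversed(rest):
        cost = count_tokens(message)
        if cost > budget:
            break
        kept.append(message)
        budget -= cost

    # Restore chronological order for the kept recent messages.
    return [first] + list(reversed(kept))


if __name__ == "__main__":
    session = [
        "plan the billing feature",       # original prompt
        "tangent about css colors",
        "another tangent about logging",
        "add the invoice model",
        "now write its migration",        # most recent input
    ]
    print(truncate_middle(session, max_tokens=12))
```

With a 12-“token” budget, this prints the original prompt followed by the two most recent messages; the two tangents in the middle are what gets “forgotten.” That’s the meandering middle in miniature, and one more reason to keep each session focused on a single task.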

The best practices for agentic coding aren’t really different from what the best practices for developers have always been: think first, execute task by task, review methodically. Every time you let an LLM autocomplete a decision without reviewing it, you’re trading away an opportunity to become a better developer; you’re sacrificing learning moments for productivity boosts. And every time you treat an agent like a sentient collaborator rather than a tool, you blur the distinction between your mind (and the unique constellation of expertise it houses) and a pattern-matching hivemind that’s designed to default to the most probable answer.

Writing less code shouldn’t mean understanding code less. But that’s exactly what happens when we use LLMs without intention.

What else happens to our skills over time as we outsource more and more of the work that helps us understand systems deeply? Let’s ponder the future together in the final post of this series: How Will LLMs Transform Us? AI as a Tool in the Future of Development.
